In brief

- Microsoft’s MAI-Image-2 is a new state-of-the-art AI image generation model.

- Thanks to its strong realism and text rendering, the model places Microsoft third among AI labs on the Image Arena leaderboard.

- Strict filters, usage caps, and missing features currently limit real-world usefulness, however.

Microsoft has been quietly building its own image generator. Announced Thursday by the company's AI Superintelligence team, MAI-Image-2 has already landed at #3 on the Arena.ai leaderboard—behind only the models from Google and OpenAI—making Microsoft a legitimate player in a space it had previously outsourced to its partners.

That last part is worth sitting with. Microsoft has been paying OpenAI billions to power Copilot and Bing Image Creator, so building a competing image model in-house is a clear step toward reducing that dependence.

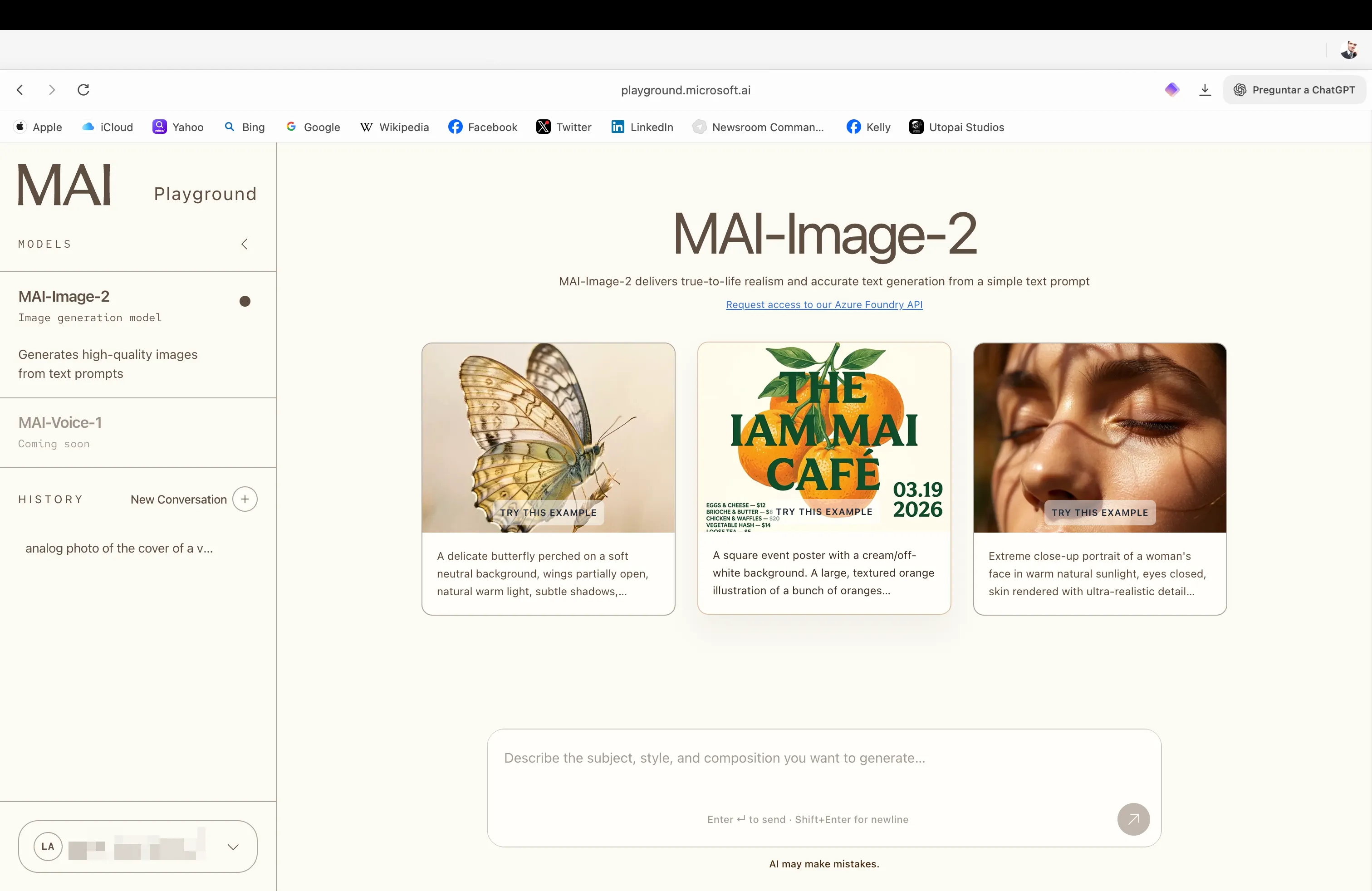

MAI-Image-2 is available now in the MAI Playground, with a gradual rollout to Copilot and Bing Image Creator underway. API access is currently limited to select enterprise customers, with broader availability on Microsoft Foundry coming soon.

The team says it shaped the model through direct conversations with photographers, designers, and visual storytellers. Three priorities came out of those conversations: improved photorealism, more reliable in-image text generation, and a stronger capacity for detailed, imaginative scene construction. Whether that process produced a genuinely useful tool is a different question.

Testing MAI-Image-2

The first thing you notice when you open the MAI Playground is how understated it is. The interface is minimal and clean, visually somewhere between Claude and Hume, with none of the maximalist dashboard energy you get from Midjourney or the chatbot experience you get from Gemini.

The images themselves are strong. Photorealism is the standout: the model has a solid grasp of natural light, surface texture, and spatial relationships. It doesn't quite reach the level of Google’s Nano Banana Pro, which still tops the leaderboard for a reason, but in some realism tests it comes surprisingly close.

Better prompting likely pushes it further; our initial results improved noticeably as we dialed in our descriptions.

The model also handled complex, physically impossible scenes well, outperforming other models on details such as body proportions, limb placement, depth, and spatial positioning.

For example, our image of a dog riding a bike in the middle of the ocean is arguably the most accurate one we’ve produced in zero-shot tests.

Text rendering is a legitimate highlight. MAI-Image-2 handled complex typography (large blocks of in-image text, posters, signage) far more consistently than we expected, without the garbling typical of most models.

We even pushed it toward multilingual text: it managed to render some Chinese characters (hanzi), though not with perfect accuracy. Still, the fact that it tried and got partway there is notable.

The model understands artistic style well, shifting between photographic realism, graphic design aesthetics, and illustrated styles without much friction. It reads prompts carefully, including stylistic instructions, and delivers something coherent on the other end. For a broad range of visual tasks, it's versatile.

Now for the harder truths.

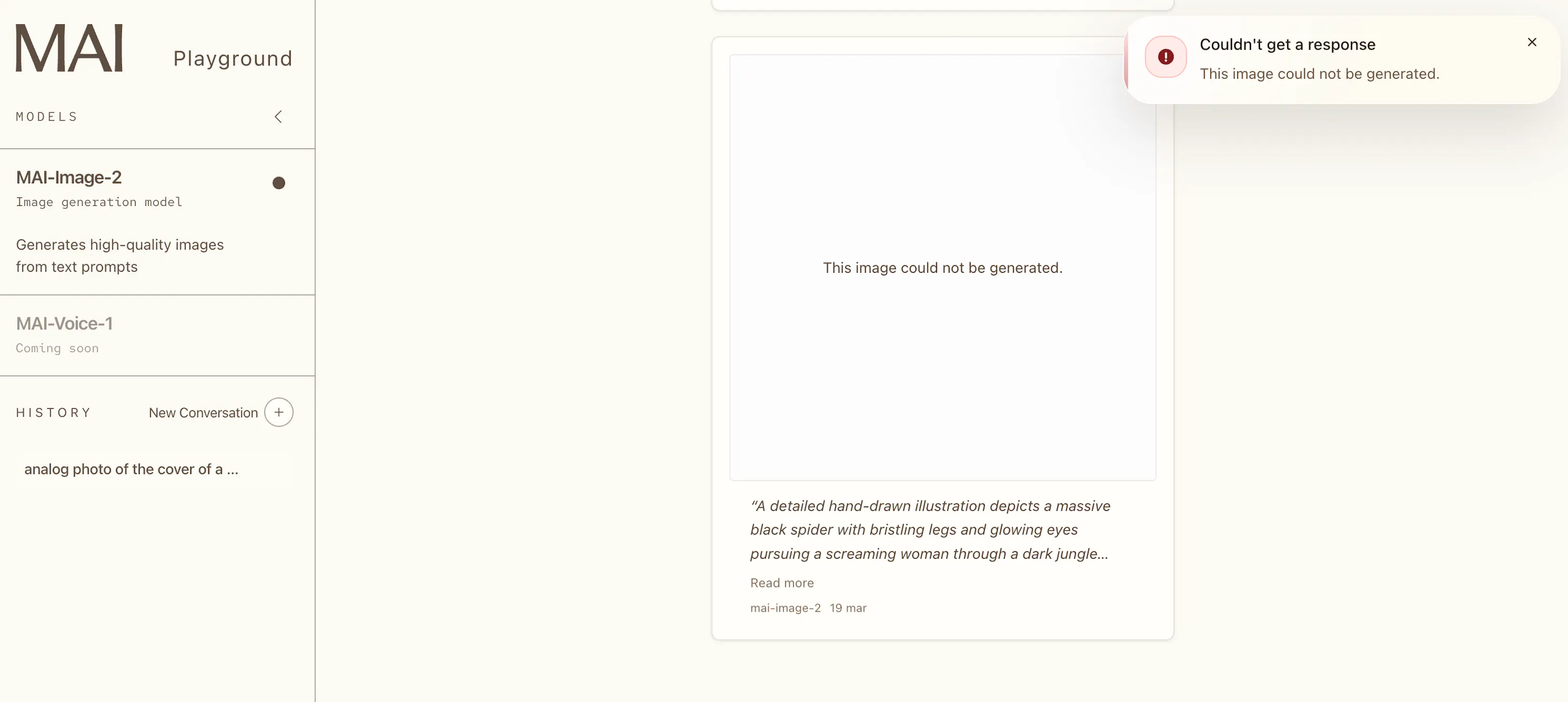

MAI-Image-2 is aggressively filtered, more so than Google Imagen and more so than OpenAI’s DALL-E. We ran our usual test, a cartoon drawing of a spider chasing a woman, and got a flat refusal. Again, that's a cartoon drawing of a spider. The content moderation is tuned to a level that will frustrate anyone doing creative work in gray areas, horror illustration, or anything that reads as remotely tense.

The usage limits are equally restrictive. Each generation triggers a 30-second cooldown, and after 15 images you're locked out for 24 hours, which means a full day's quota can be used up in under ten minutes. For casual experimentation, that's manageable. For any kind of production workflow in the native UI, it's a dealbreaker.

There's also only one aspect ratio: 1:1. No landscape, no portrait, no custom ratios. In 2026, that's a significant limitation, particularly for social media content, which is presumably exactly where Microsoft wants this embedded in Copilot.

And speaking of Copilot: MAI-Image-2 isn't there yet. The rollout is happening, but as of today, the product you'd actually want it in doesn't have it.

One more missing piece: this is purely a text-to-image tool. No image-to-image, no inpainting, no outpainting, no reference image support. For users expecting anything close to the editing capabilities of Firefly or Midjourney, this will feel half-finished.

Our take

MAI-Image-2 performs better than its leaderboard ranking suggests. In our hands-on tests, it beat GPT-Image on image quality and text rendering, a notable result given that GPT-Image sits above it on Arena.ai’s leaderboard. Benchmark positions don't always tell the full story.

The strategic logic behind building this is clear. Microsoft has been licensing OpenAI's image models for Copilot while simultaneously funding OpenAI's biggest competitor, Anthropic. Having a capable in-house model reduces dependency, cuts costs at scale, and gives Microsoft something to iterate on without asking for permission.

From that angle, MAI-Image-2 doesn't need to beat Nano Banana Pro. It just needs to be good enough, and it is.

The problem is the product constraints. The generation caps, the strict content policy, the 1:1-only output, and the missing editing features are exactly the kinds of limitations that put a ceiling on real-world utility. A model this capable deserves infrastructure that matches it.

MAI-Image-2 is a strong technical foundation hamstrung by conservative product decisions. Once Microsoft loosens the restrictions, this becomes a serious contender. Right now, it's a promising preview of what Microsoft's image stack could actually become.